Shift Group

Turning AI tools inward to evaluate and optimize the product they power

92%

output accuracy maintained with 60% fewer questions5 days

from first experiment to validated recommendation

Shift Group's recruiting platform helps athletes and veterans transition into business careers. Their onboarding relied on an 11-question self-discovery questionnaire that powered an AI tool to generate personalized candidate profiles. But a lot of candidates were dropping off before completing it. The instinct was to cut questions. The problem was knowing which ones.

OBLSK came in to answer a specific question with evidence, not guesswork: which inputs actually drive the quality of the AI-generated output? Over five days, we ran six structured experiments, using large language models as both analytical tools and evaluators. The work produced both a recommendation and a repeatable methodology.

Challenge

- Candidates were abandoning the questionnaire before completion, blocking the AI tool from generating additional profile data

- Eleven open-ended prompts created significant friction before candidates could proceed

- Simply cutting questions based on instinct risked degrading the output. Shift Group needed evidence to justify changes to a core part of their product

Solution

- Designed and ran six LLM-powered experiments over five days, each informed by the results and limitations of the previous:

- Influence scoring: used ChatGPT as automated judges to score each question's impact on the scouting report, iterating from subjective 0–5 scoring to reliable binary scoring when early results proved inconsistent

- Question utility analysis: prompted the LLM to evaluate whether each question contributed unique insights or overlapped with other responses

- Reverse engineering: independently prompted the AI to work backward from completed scouting reports to identify the most essential questions, which converged on the same themes as the forward analysis

- Validation: generated additional profile data from the reduced four-question set and scored accuracy against full 11-question outputs using automated model-graded evaluation

Result

- Delivered a clear, reproducible answer to Shift Group's question about whether the questionnaire could be significantly reduced while still generating profile data of the same quality

- Reports generated from four inputs achieved 92% accuracy against the originals, representing a reduction of more than 60% in questionnaire length with no meaningful loss in output quality

- Proved that rigorous, evidence-backed experimentation doesn't require a quarter-long research engagement. We completed six experiments in five days, producing reproducible results

- Established a transferable methodology for any product where input complexity creates user friction, including lead qualification forms, intake workflows, product configurators, or any AI-driven process where you need to know which inputs actually matter

Type

- AI optimization

Project duration

- 5 days

Technologies used

- OpenAI API

- Inspect AI

- Next.js

- PostgreSQL

Check Out More Work

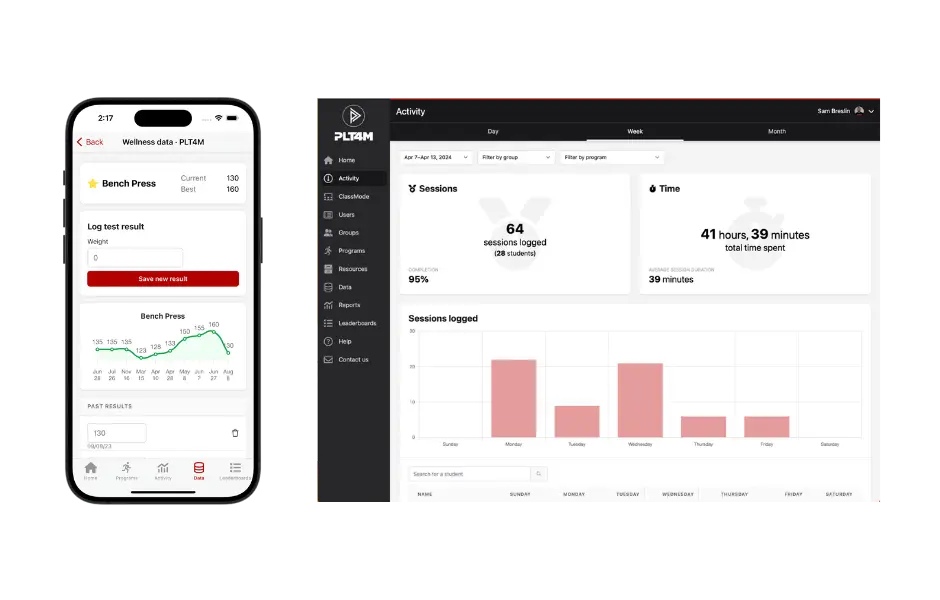

Rescue and digital transformation of an educational fitness product

OBLSK stabilized the platform and made bug fixes and feature enhancements until the business was ready for a full product rewrite. The rebuild allowed PLT4M to expand new product initiatives and land larger deals.

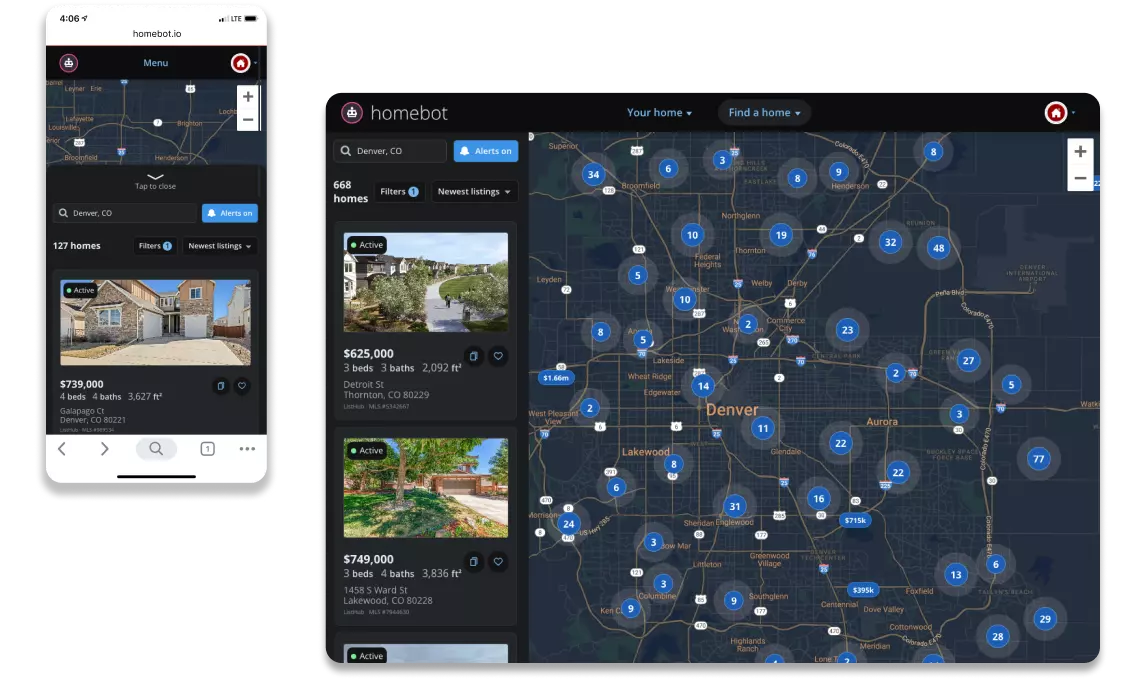

Integrating a residential real estate listings platform with a home equity valuation product

Homebot acquired Nest Ready, a residential real estate listings platform, that needed to be fully integrated with Homebot’s home equity valuation product. OBLSK was asked to integrate this acquired tech product in 100 days.